
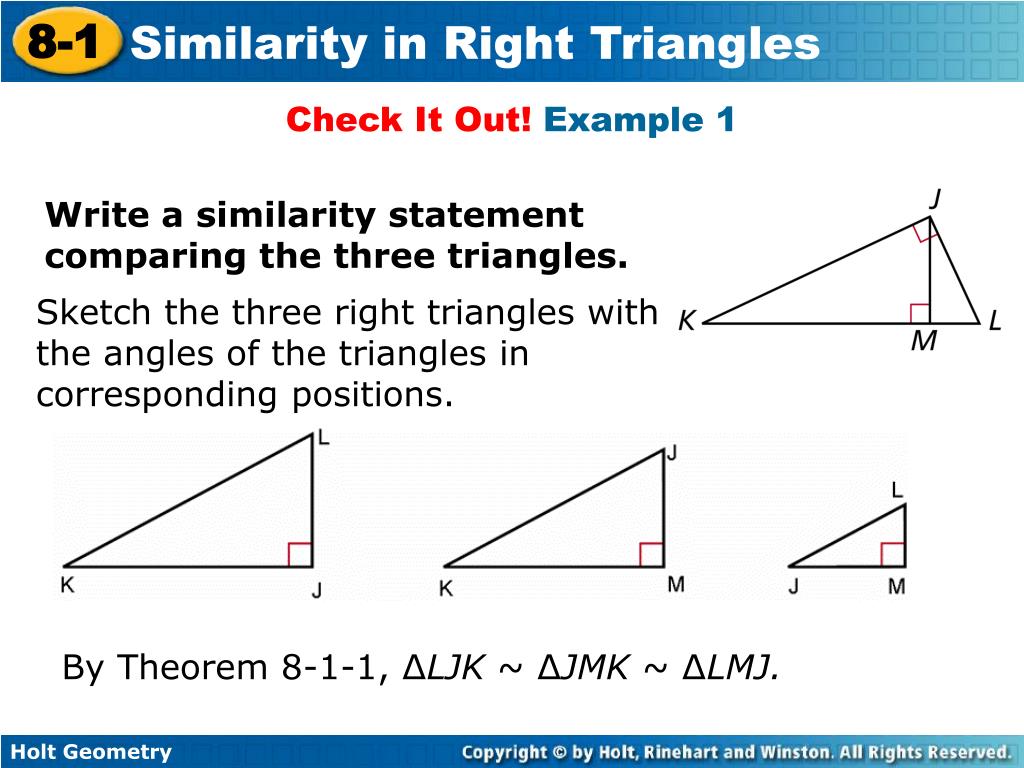

Word embedding technology has promoted the development of many NLP tasks. However, these embeddings often require a lot of storage, memory, and computation, resulting in low efficiency in NLP tasks. To address the problem, this paper proposes a new method for compressing word embeddings. We sample a set of orthogonal vector pairs from the word embedding matrix in advance. These vector pairs are then fed into a neural network with shared weights (i.e., a Siamese network) to obtain low-dimensional forms of the vector pairs. Appending the difference of each vector pair to the pair gives two vector triplets, one from the original pair and one from its low-dimensional form, which can be regarded as two triangles. The neural network is trained by minimizing the mean squared error of the three internal angles between the two triangles. Finally, we extract its shared body as a compressor. The essence of this method is the right triangle similarity transformation (RTST), a combination of manifold learning and neural networks, which distinguishes it from other methods. The orthogonality in right triangles is beneficial to the construction of the compressed space. RTST also maintains the relative order of each edge (vector norm) in the triangles. Experimental results on semantic similarity tasks reveal that the vector size is 64% of the original, while the performance is improved by 1.8%. When the compression rate reaches 2.7%, the performance drop is only 1.2%. Detailed analysis and an ablation study further validate the rationality and robustness of the RTST method.
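The angle-matching objective described in the abstract can be illustrated with a small NumPy sketch. It is not the authors' implementation: the function names `triangle_angles` and `angle_loss`, the toy vectors, and the random linear map standing in for the Siamese network are all assumptions made for illustration. Each pair (u, v) is treated as a triangle with vertices at the origin, u, and v, so the third edge is u − v.

```python
import numpy as np

def triangle_angles(u, v):
    """Internal angles (radians) of the triangles with vertices 0, u, v.

    u, v: arrays of shape (n, d). Returns an array of shape (n, 3) holding
    the angle at the origin, at u, and at v for each pair.
    """
    def angle(a, b):
        cos = np.sum(a * b, axis=-1) / (
            np.linalg.norm(a, axis=-1) * np.linalg.norm(b, axis=-1)
        )
        return np.arccos(np.clip(cos, -1.0, 1.0))

    return np.stack(
        [angle(u, v), angle(u, u - v), angle(v, v - u)], axis=-1
    )

def angle_loss(u, v, compress):
    """Mean squared error between the internal angles of the original
    triangles and those of the triangles formed after compression."""
    original = triangle_angles(u, v)
    compressed = triangle_angles(compress(u), compress(v))
    return float(np.mean((original - compressed) ** 2))

# Toy orthogonal pair in 4 dimensions (hypothetical data, not real embeddings).
u = np.array([[1.0, 0.0, 0.0, 0.0]])
v = np.array([[0.0, 2.0, 0.0, 0.0]])

# Hypothetical stand-in for the shared-weight network: a random 4 -> 2 linear map.
rng = np.random.default_rng(0)
W = rng.normal(size=(4, 2))
loss = angle_loss(u, v, lambda x: x @ W)
```

Because the sampled pairs are orthogonal, the angle at the origin is a right angle in the original space, so driving this loss toward zero pushes the compressed pair toward a similar right triangle. In a trainable setting, `compress` would be a differentiable network and the loss would be minimized with a gradient-based optimizer.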
